Key points from this report:

- Facebook created 108 pages for Islamic State as well as dozens of pages for other terrorist groups including Al Qaeda, TTP found.

- These pages were automatically generated by Facebook when a user listed a terrorist group in their profile or “checked in” to a terrorist group.

- Facebook generated these pages even though it bans Islamic State and Al Qaeda and says its technology is specially trained to detect them.

- Some of these pages have lingered on the platform for years, accumulating “likes” and posts with terrorist imagery.

- Because Facebook is creating, not simply hosting, these pages, the company could potentially be held liable for them.

Facebook has generated more than a hundred pages for U.S.-designated terrorist groups like Islamic State and Al Qaeda, giving greater visibility to organizations involved in real-world violence, according to a new Tech Transparency Project (TTP) investigation.

This is part of a quirk in how Facebook runs its platform: If a user lists an employer, school, or location in their profile—or checks into a “place”—that does not have an existing page, Facebook automatically creates a page for that term, even if it’s the name of a terrorist group.

Facebook is generating these pages even though it bans Islamic State, Al Qaeda, and other U.S.-designated foreign terrorist organizations under its Dangerous Individuals and Organizations policy, and has said its technology is specially trained to detect Islamic State, Al Qaeda, and their affiliates. In some cases, Facebook is allowing these pages to linger on its platform for years, accumulating “likes” and posts with terrorist imagery, TTP found.

TTP’s investigation adds to growing questions about how the major tech platforms facilitate terrorist organizing and recruitment.

Under a key internet law, Section 230 of the Communications Decency Act, tech companies are not legally responsible for what users post on their platforms. But the Supreme Court is due to hear two cases this month that challenge this liability protection when it comes to terrorism.

In Gonzalez v. Google, the court will consider a lawsuit brought by the family of an American woman killed in an Islamic State attack in Paris. The suit claims that YouTube (owned by Google) aided ISIS recruitment by recommending Islamic State videos to users, in violation of the U.S. Anti-Terrorism Act. As part of the lawsuit, the family argues that YouTube’s recommendations are not protected by Section 230.

The other Supreme Court case, Twitter v. Taamneh, will consider whether Twitter, Facebook, and Google, by allowing Islamic State to use their sites, can be held liable for aiding and abetting the group's growth, regardless of Section 230.

Facebook’s practice of auto-generating terrorist pages raises similar issues that could pose legal challenges for the company in the years ahead. Because Facebook itself is creating these pages—and is not simply hosting them on its platform—the company may not be able to rely on Section 230 to shield it from lawsuits over the content.

TTP’s findings come as Facebook parent company Meta takes on the role of chair of the Global Internet Forum to Counter Terrorism (GIFCT). The GIFCT is a non-profit organization whose mission is “To prevent terrorists and violent extremists from exploiting digital platforms.” But our investigation shows that Facebook’s ongoing auto-generation of terrorist pages is actively undermining that goal.

‘ISIS training camp’

Facebook has had ample warnings over the years about its auto-generation of pages for extremists. A whistleblower petition to the Securities and Exchange Commission flagged the issue in 2019 (followed by an update that listed nearly 200 auto-generated pages for Islamic State-related groups). Since 2020, TTP has published several reports highlighting Facebook’s auto-generation of pages for white supremacists, militias, and terrorist groups.

TTP’s new investigation goes deeper on Facebook’s creation of terrorist pages. Our research identified 108 auto-generated pages for groups or terms affiliated with the Islamic State and its regional offshoots. The group is also known as Islamic State of Iraq and Syria (ISIS), Islamic State in Iraq and the Levant (ISIL), and Daesh.

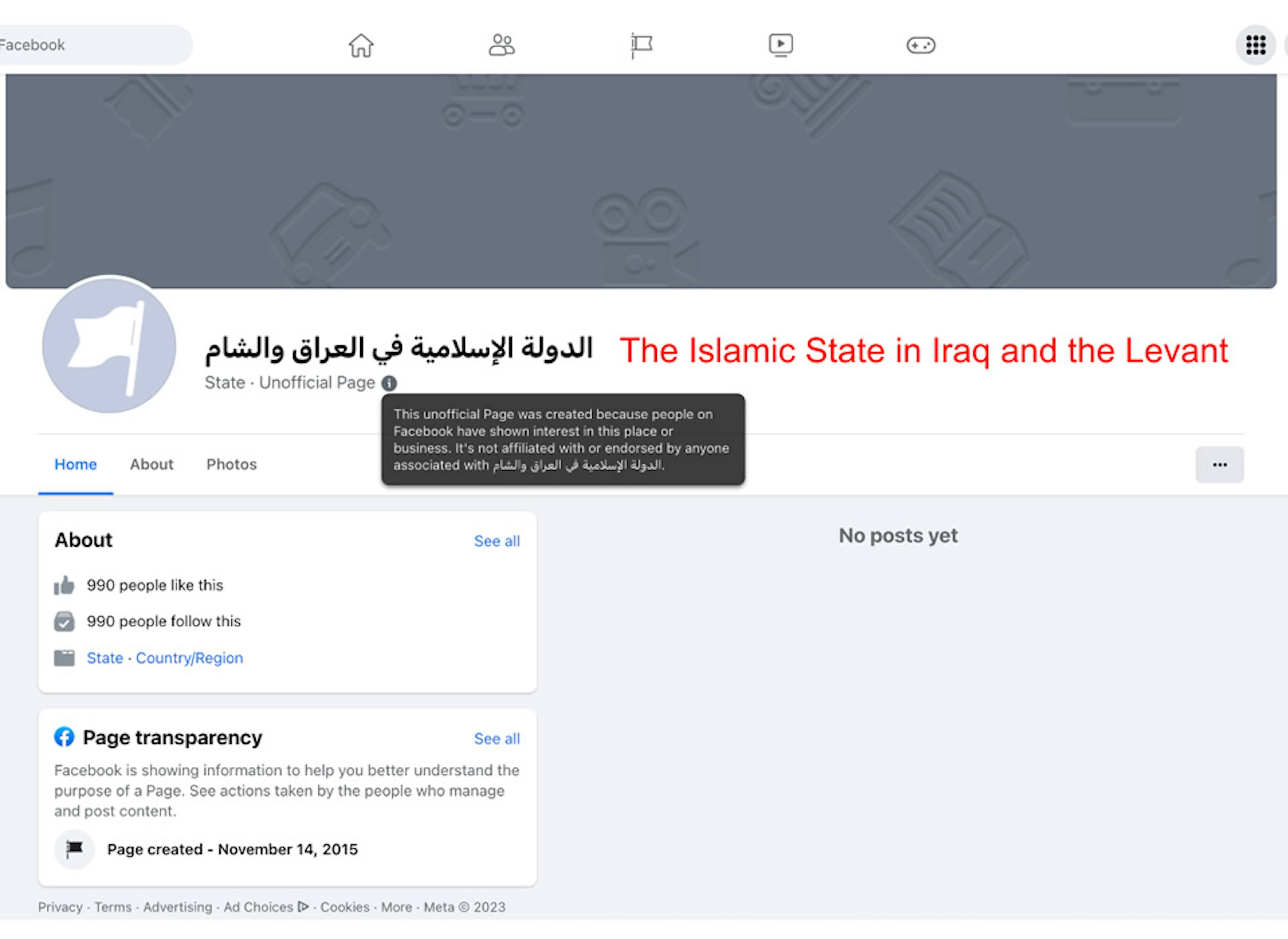

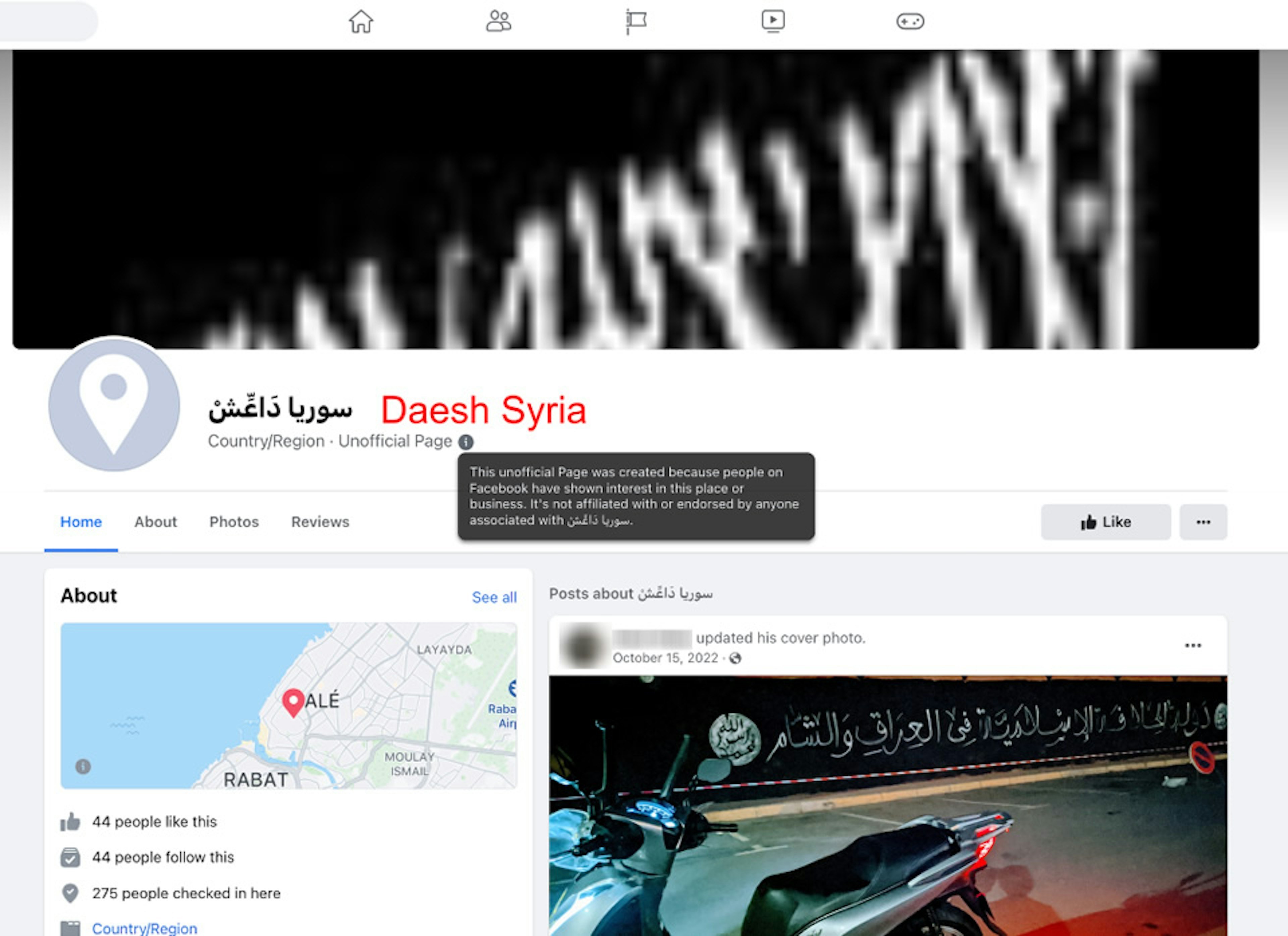

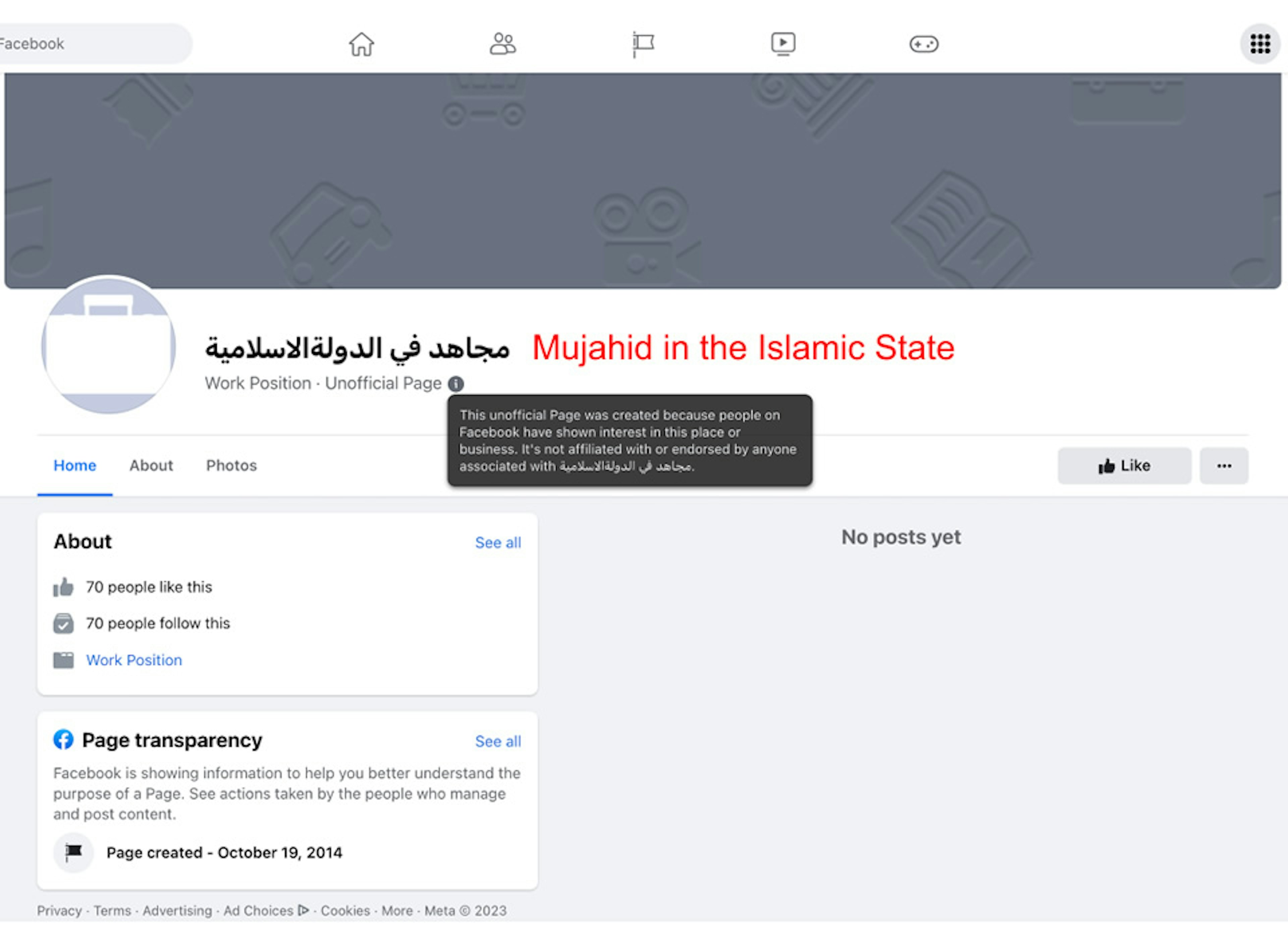

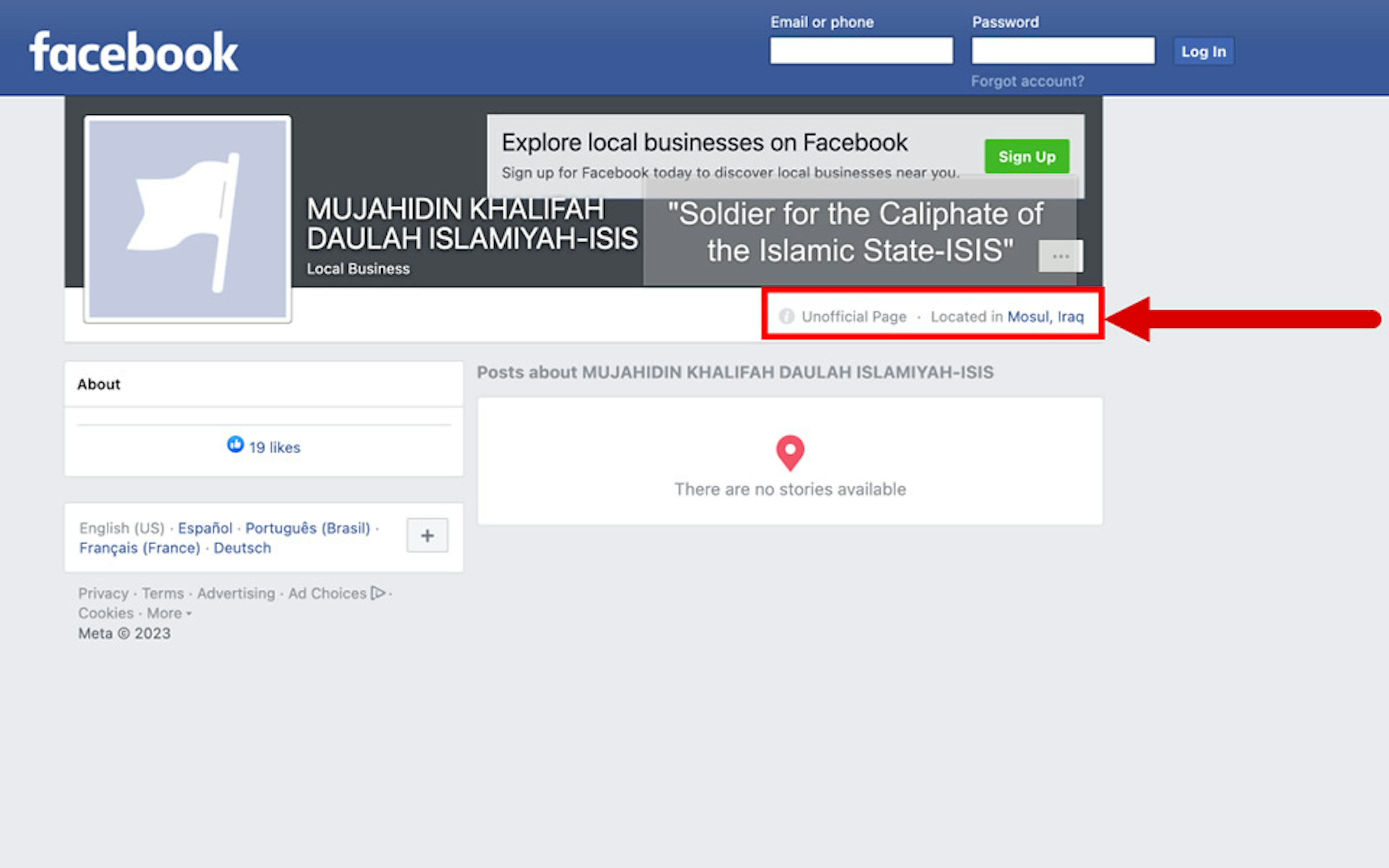

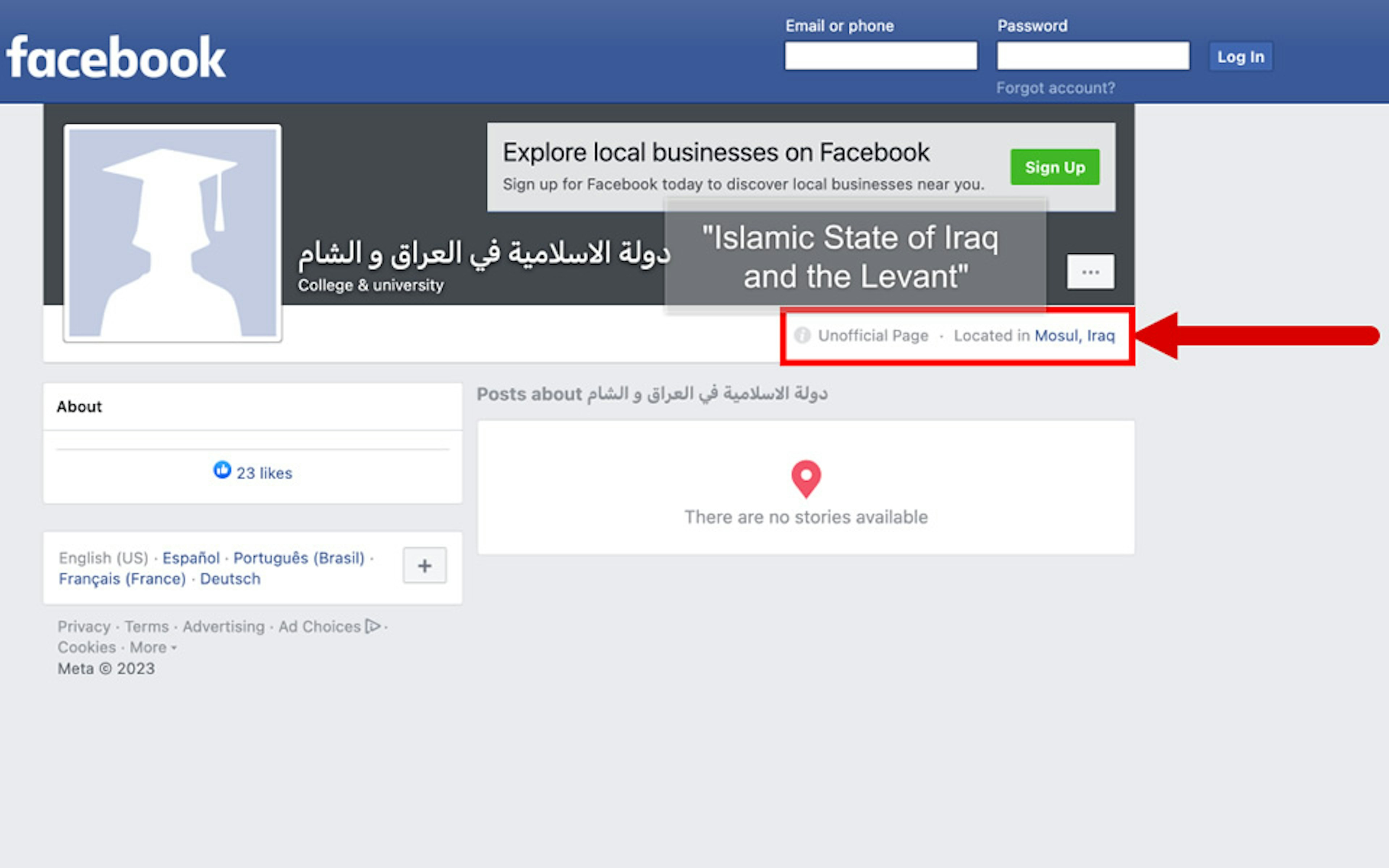

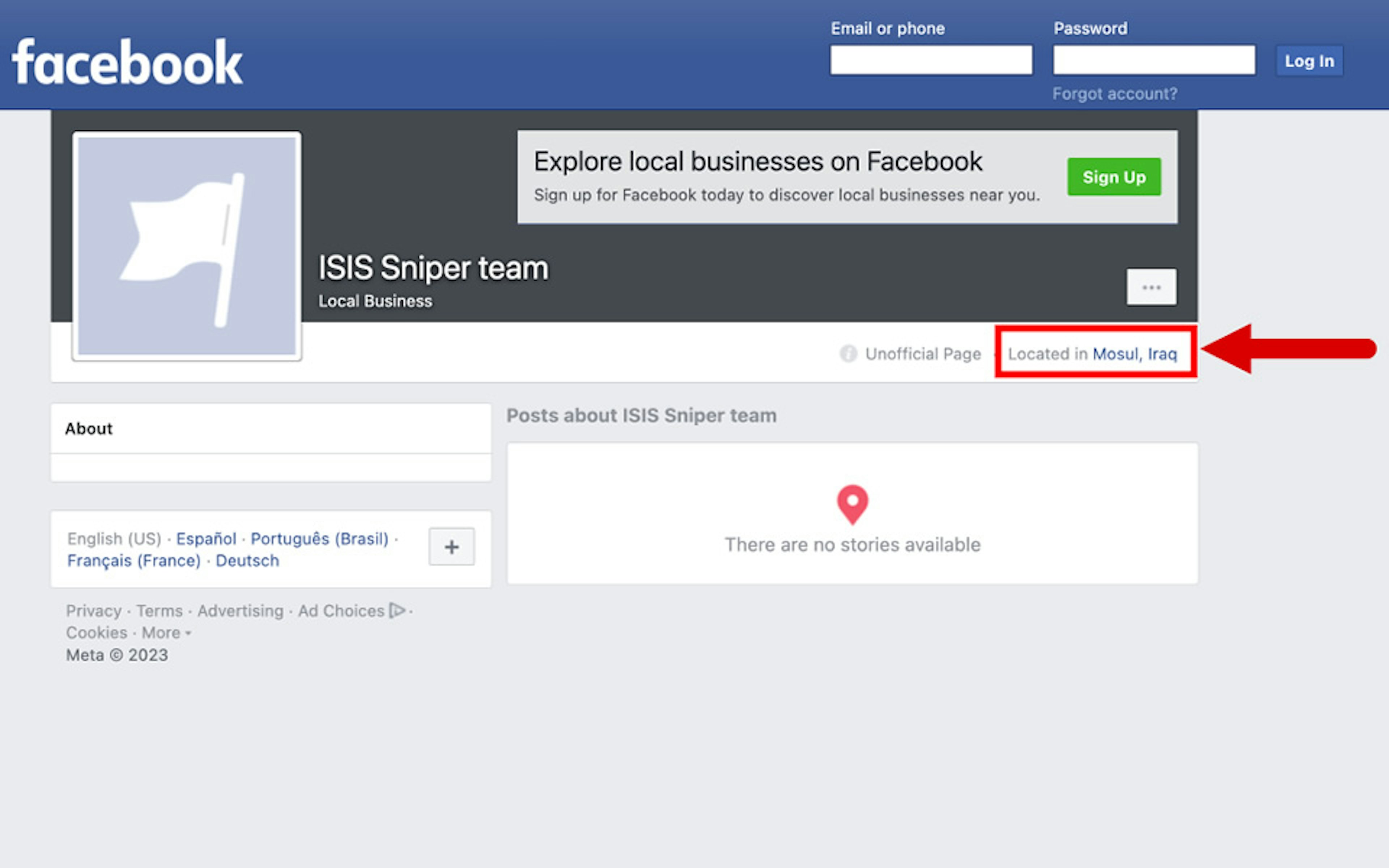

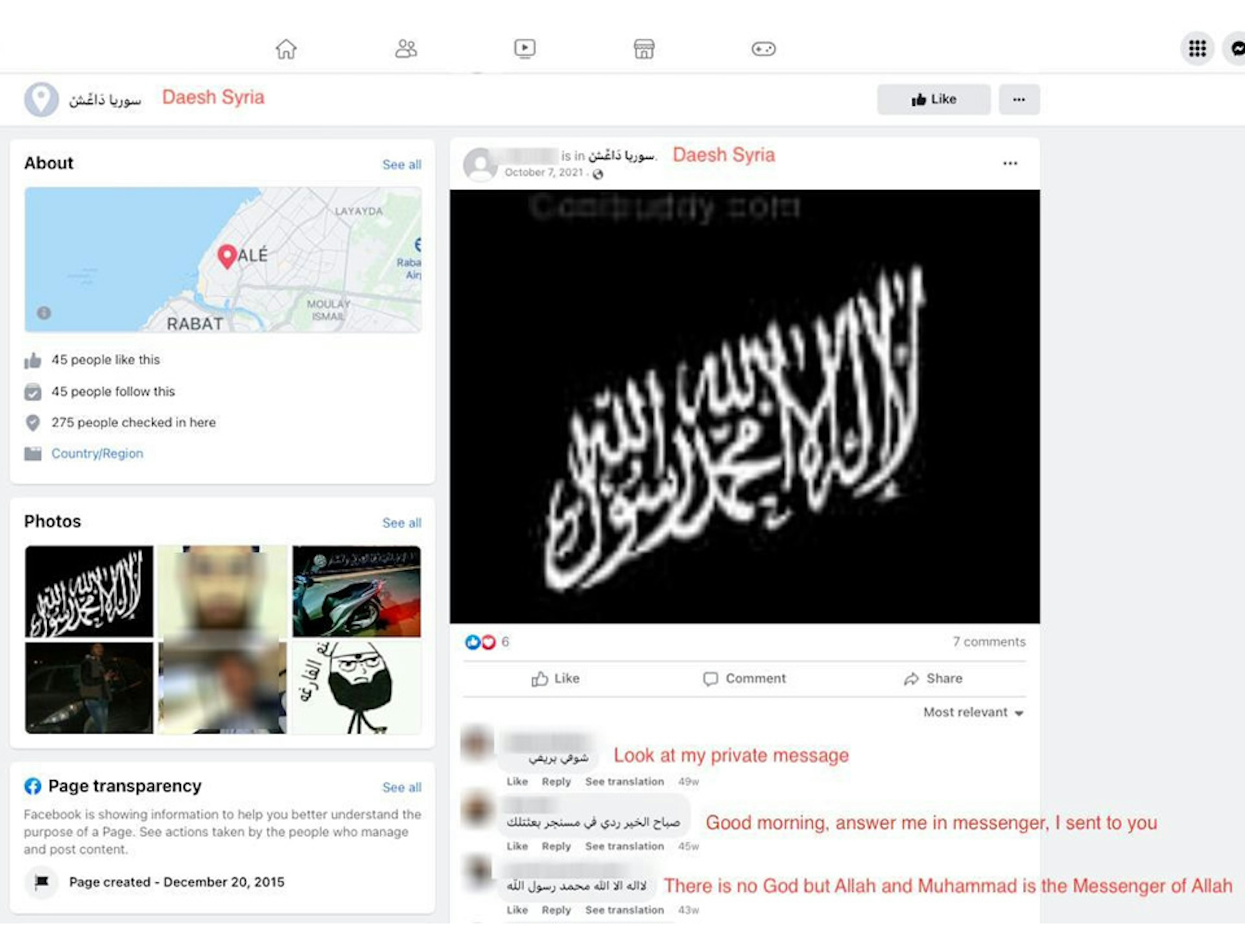

The auto-generated pages identified by TTP include “الدولة الإسلامية في العراق والشام” (“The Islamic State in Iraq and the Levant”), “سوريا دَاعِّشْ” (“Daesh Syria”), “مجاهد في الدولةالاسلامية” (“Mujahid in the Islamic State”), and “ISIS training camp.”

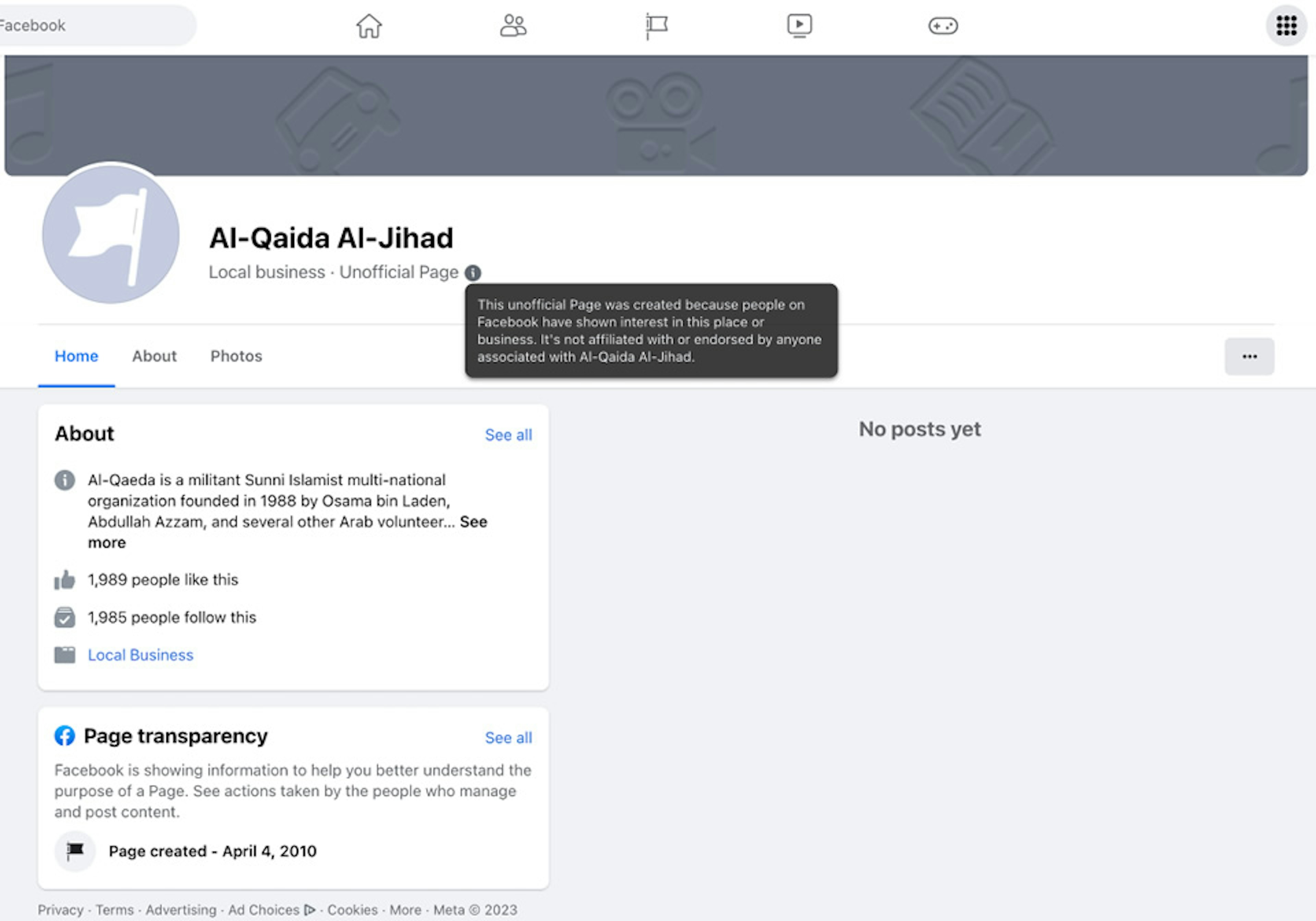

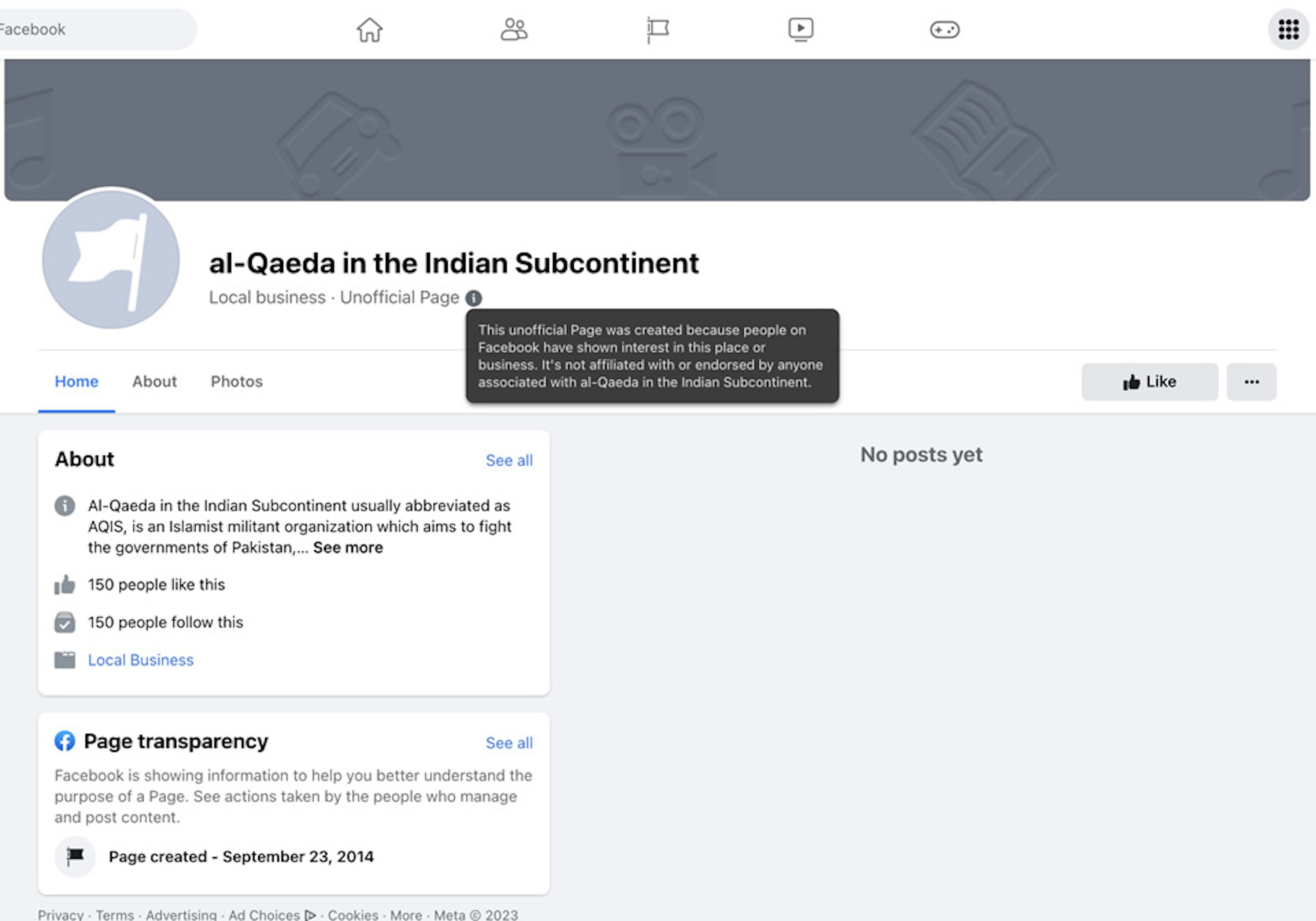

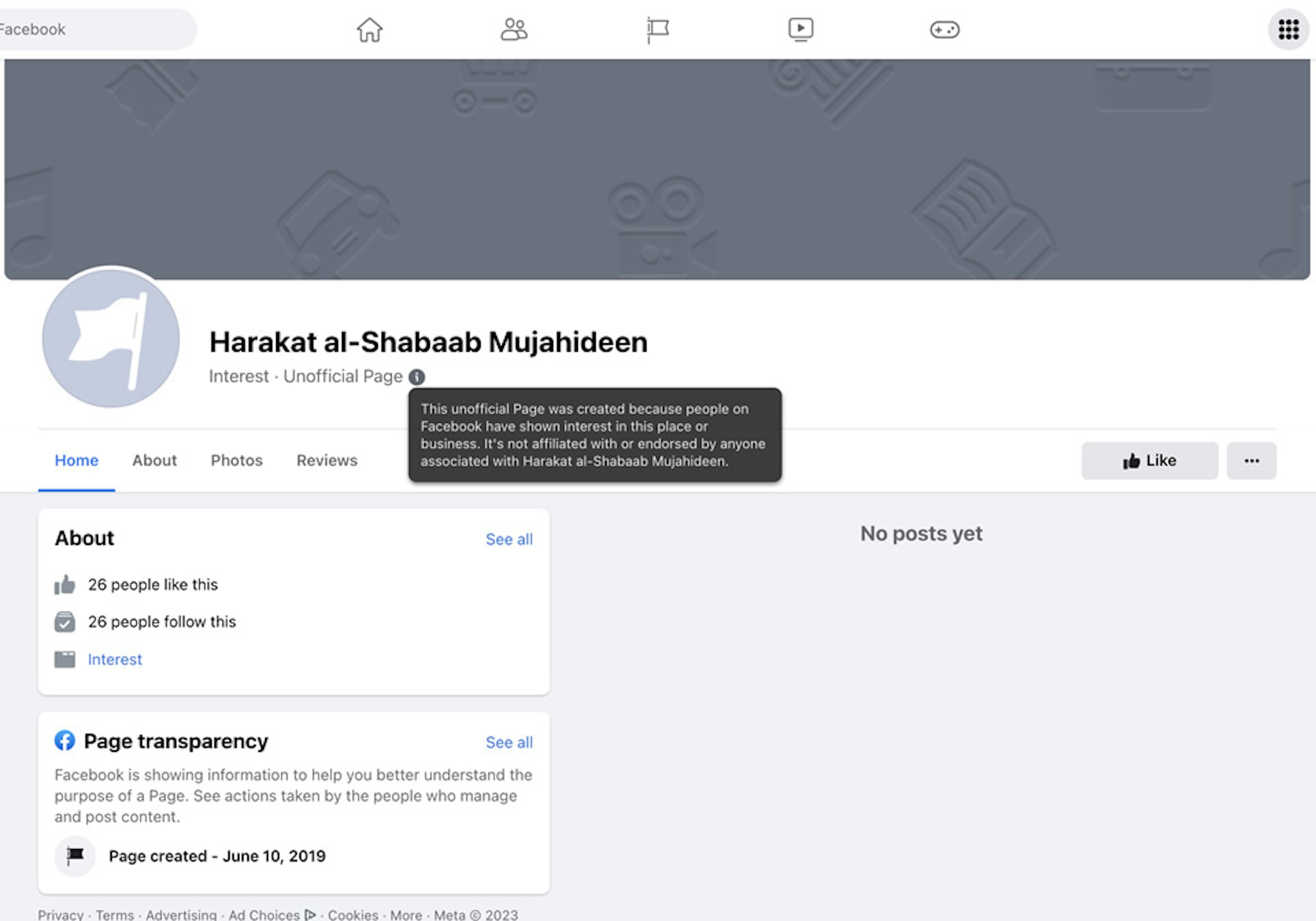

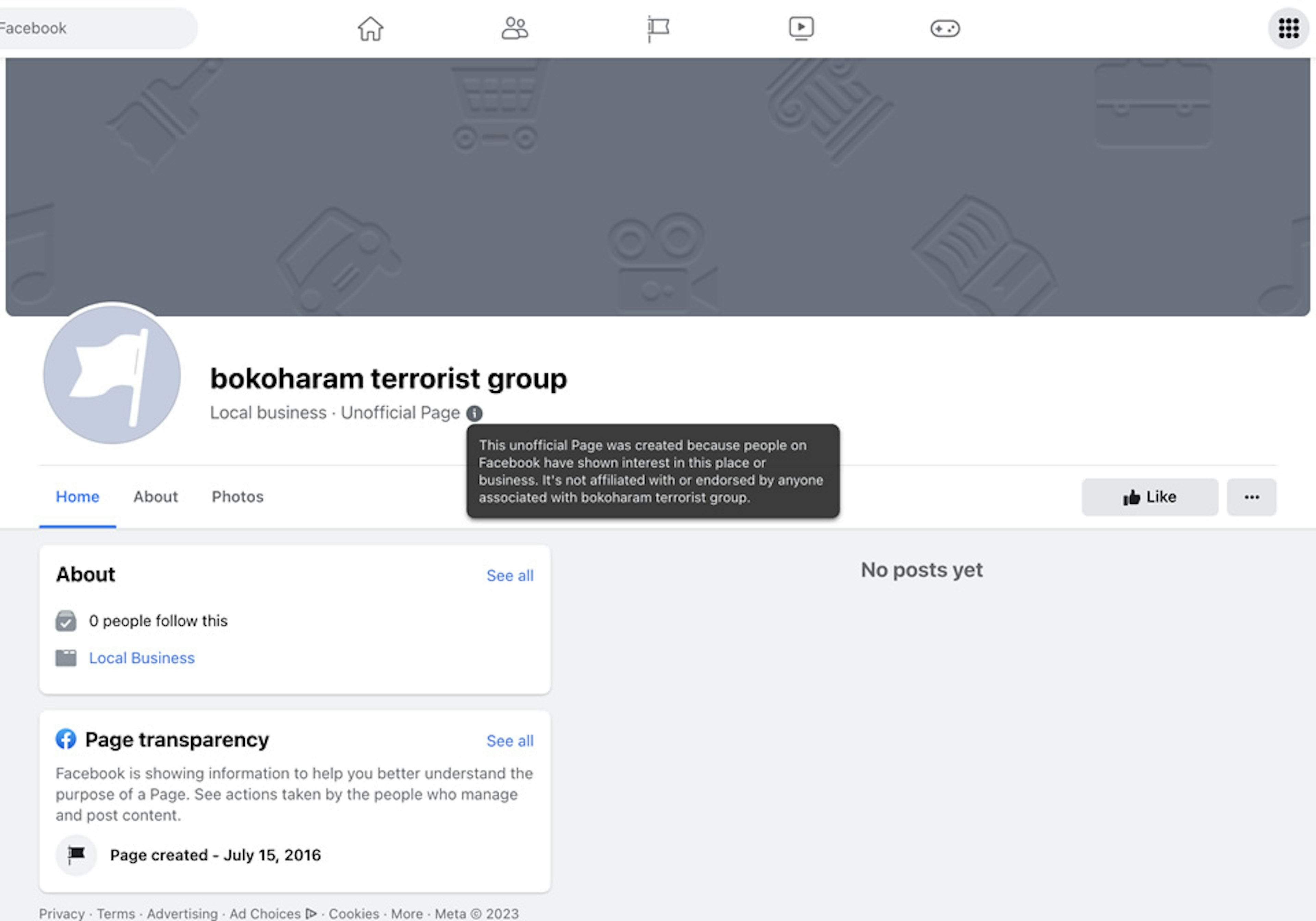

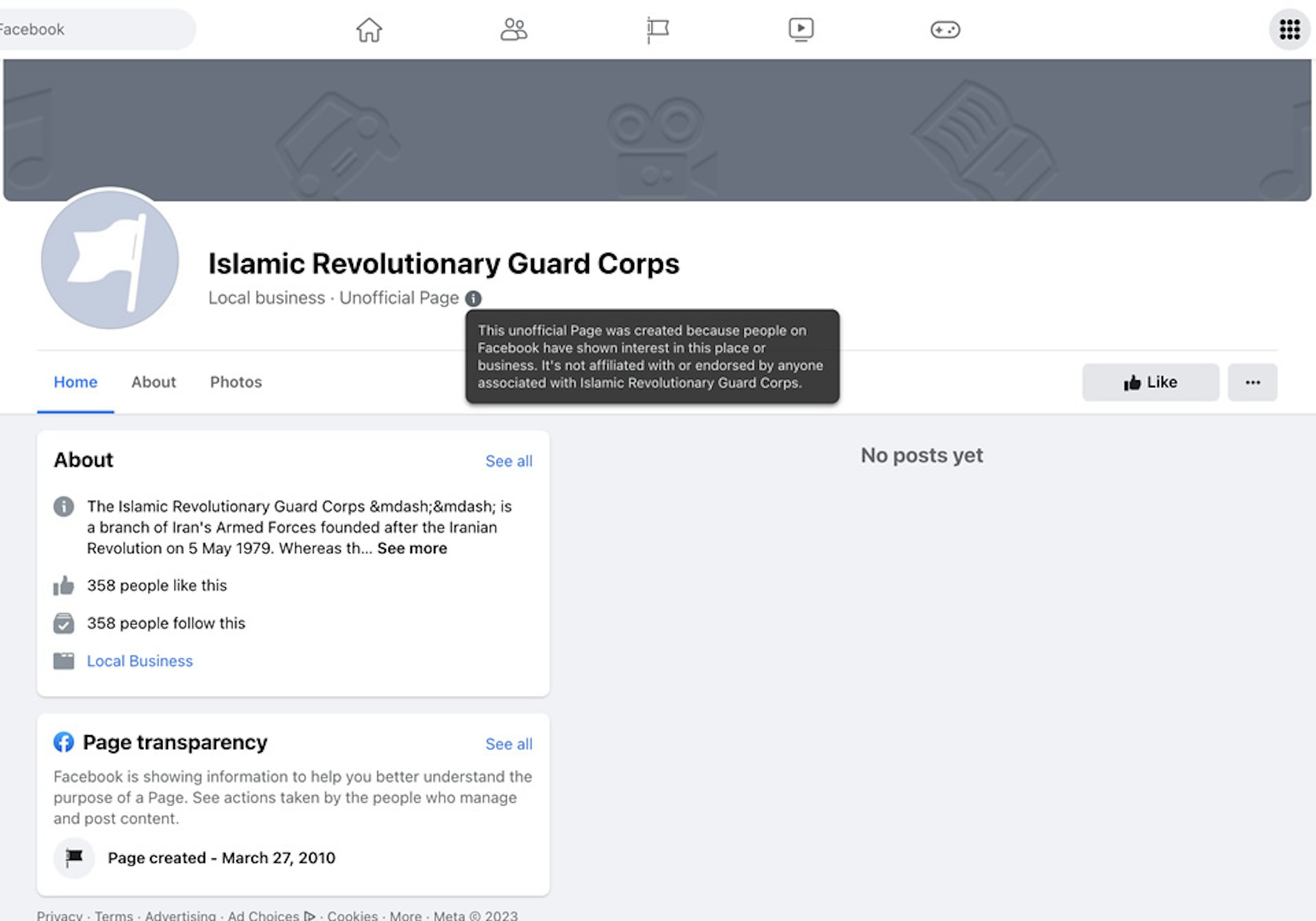

We identified additional auto-generated pages for groups and terms affiliated with Al Qaeda, Al Shabaab, Boko Haram, and Iran’s Islamic Revolutionary Guard Corps—which, like ISIS, are U.S.-designated foreign terrorist organizations banned by Facebook. Other auto-generated pages identified during the course of research had names with general terrorist references like “Suicide Bomber Academy.” (All Facebook pages, including auto-generated pages, are publicly accessible to anyone and can be viewed without a Facebook account.)

According to Facebook’s “Dangerous Individuals and Organizations” policy, U.S.-designated foreign terrorist groups are subject to “most extensive enforcement” because they have the “most direct ties to offline harm.”

In April 2018, Facebook even boasted about its enforcement efforts against ISIS and Al Qaeda and said its detection technology focuses specifically on the two groups and their affiliates, which “pose the broadest global threat.”

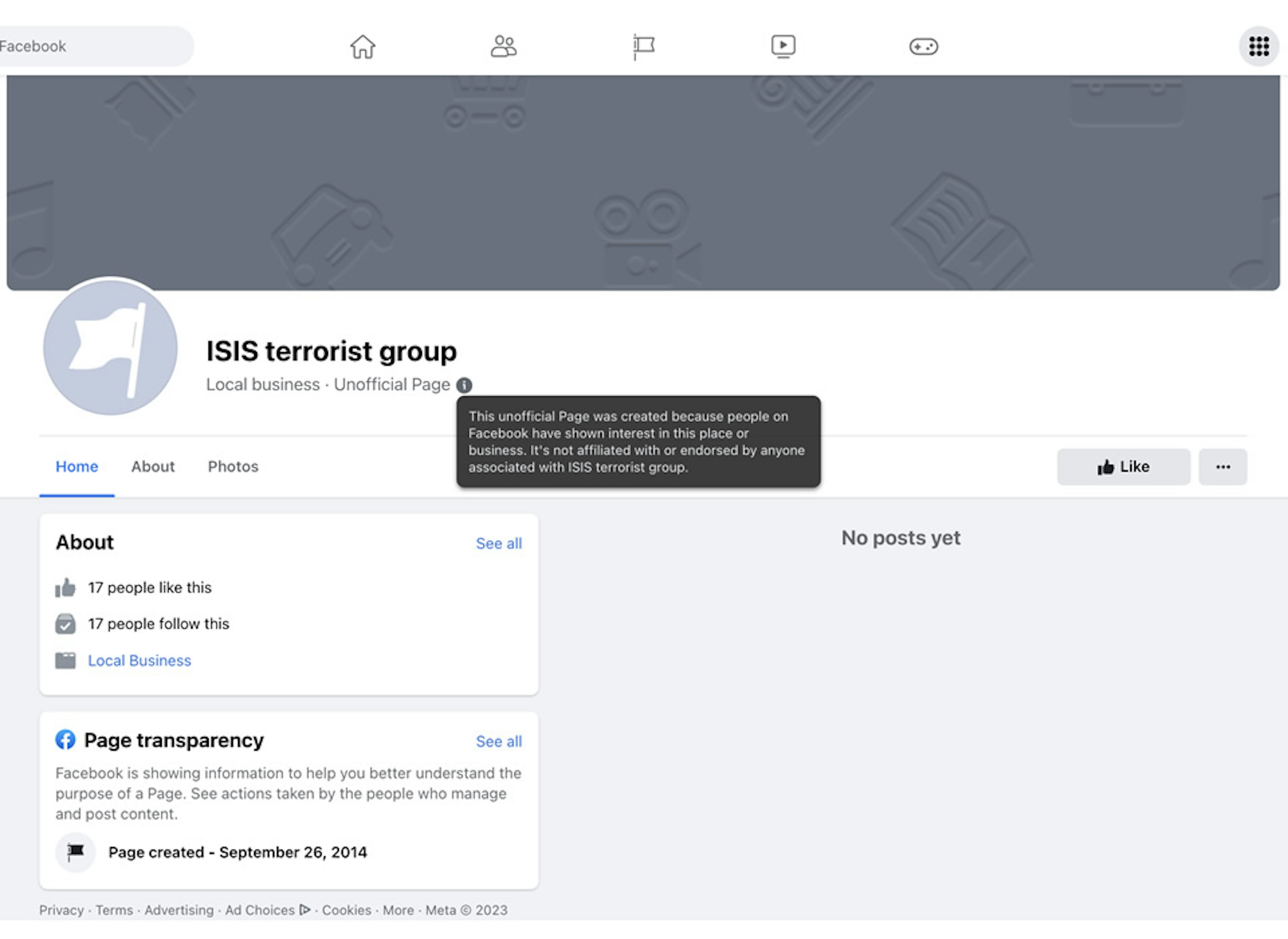

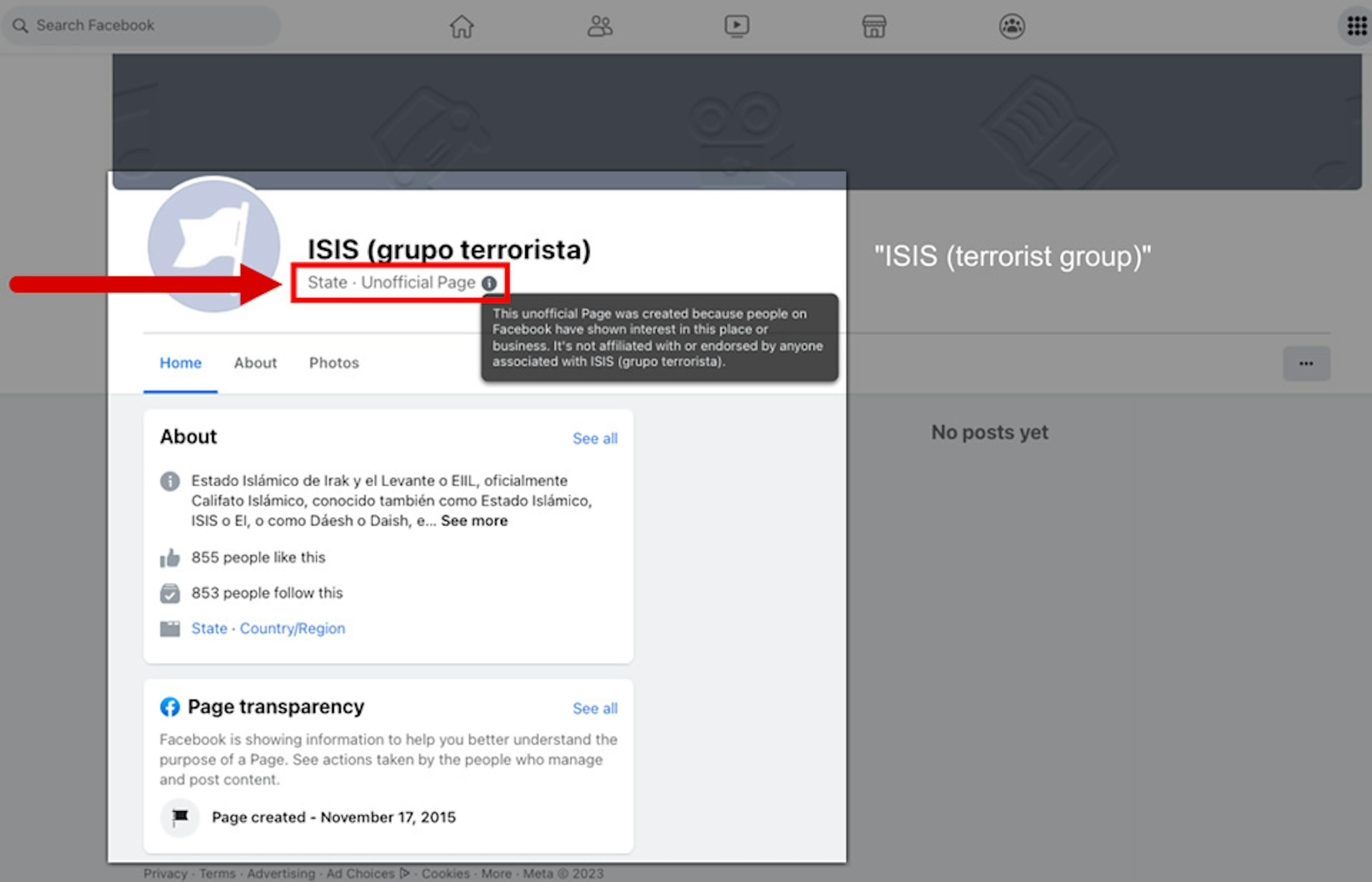

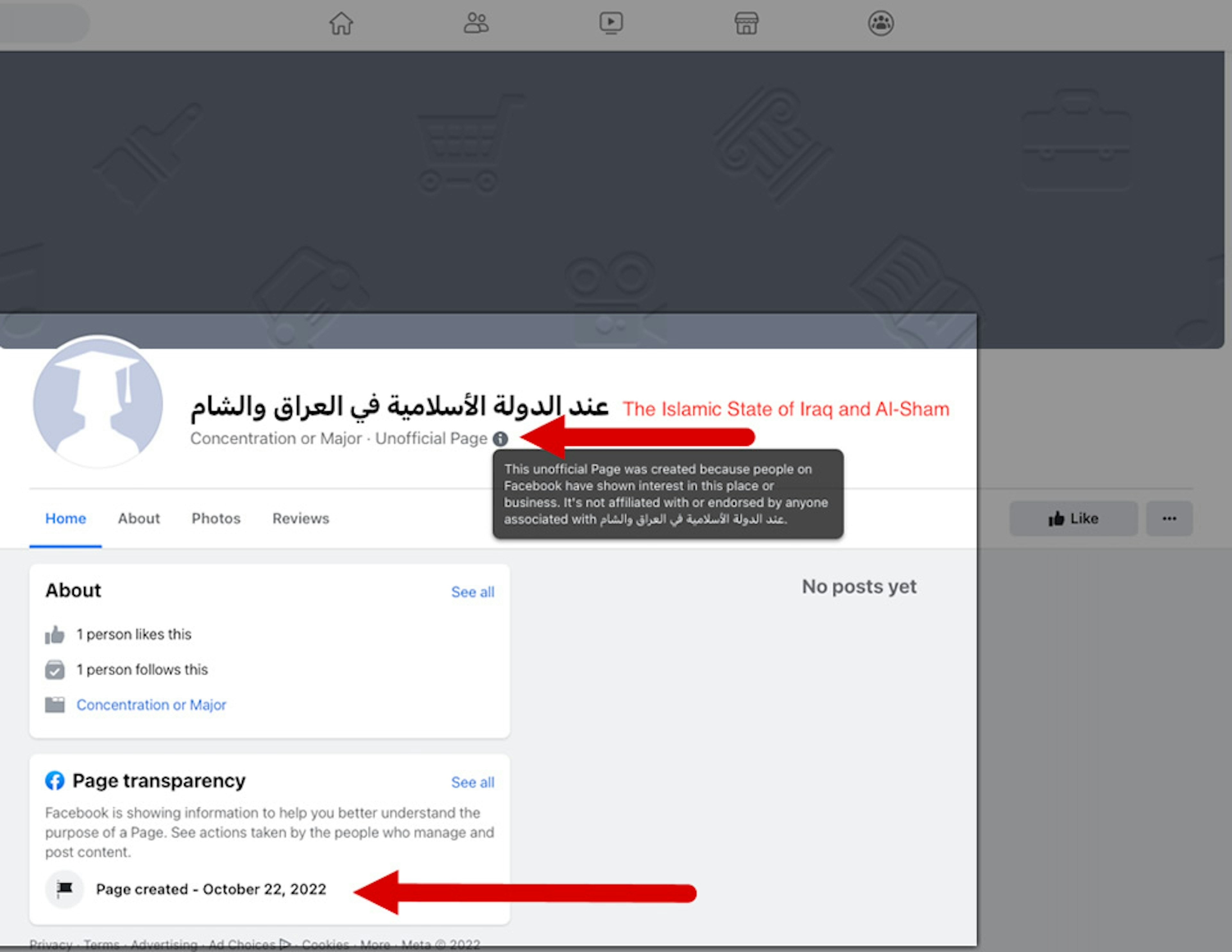

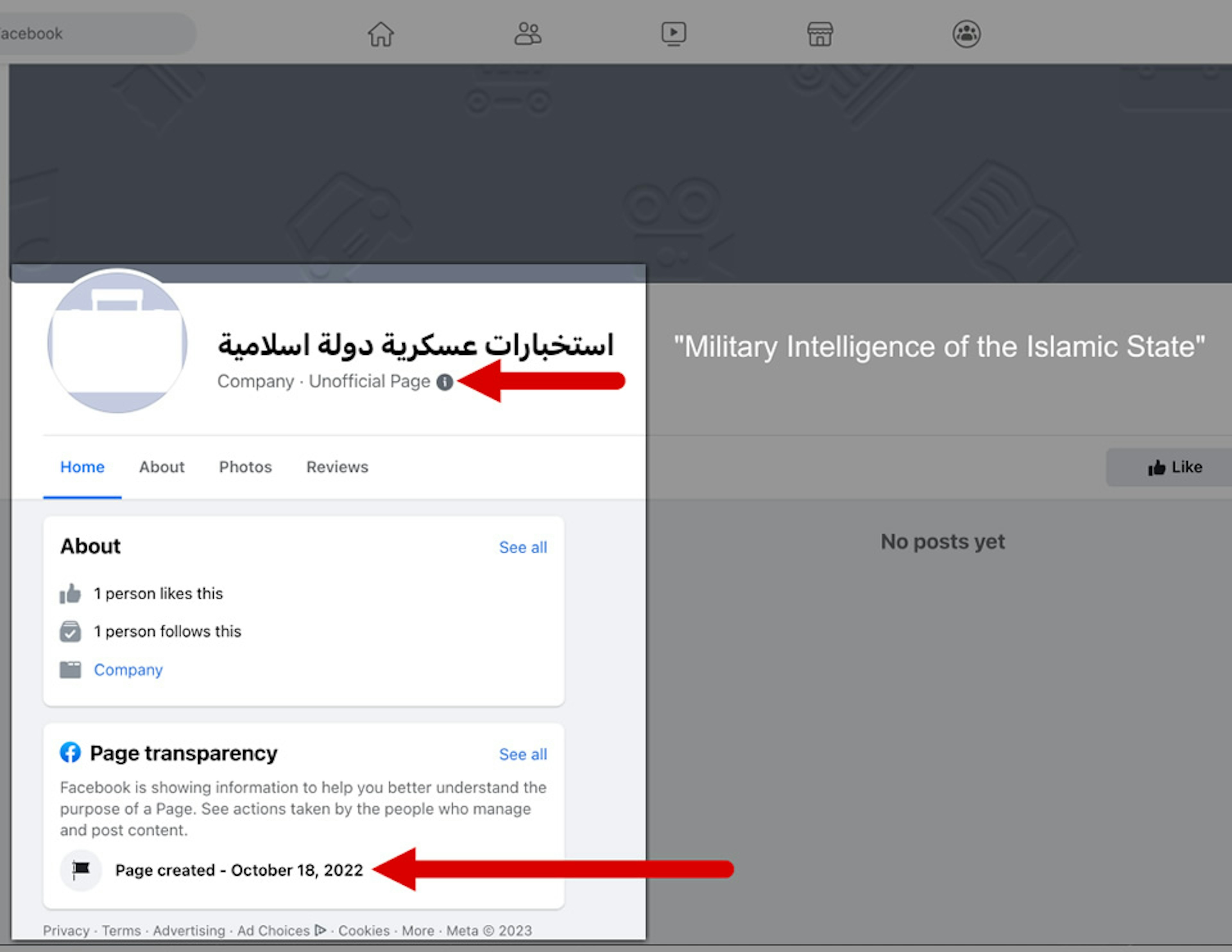

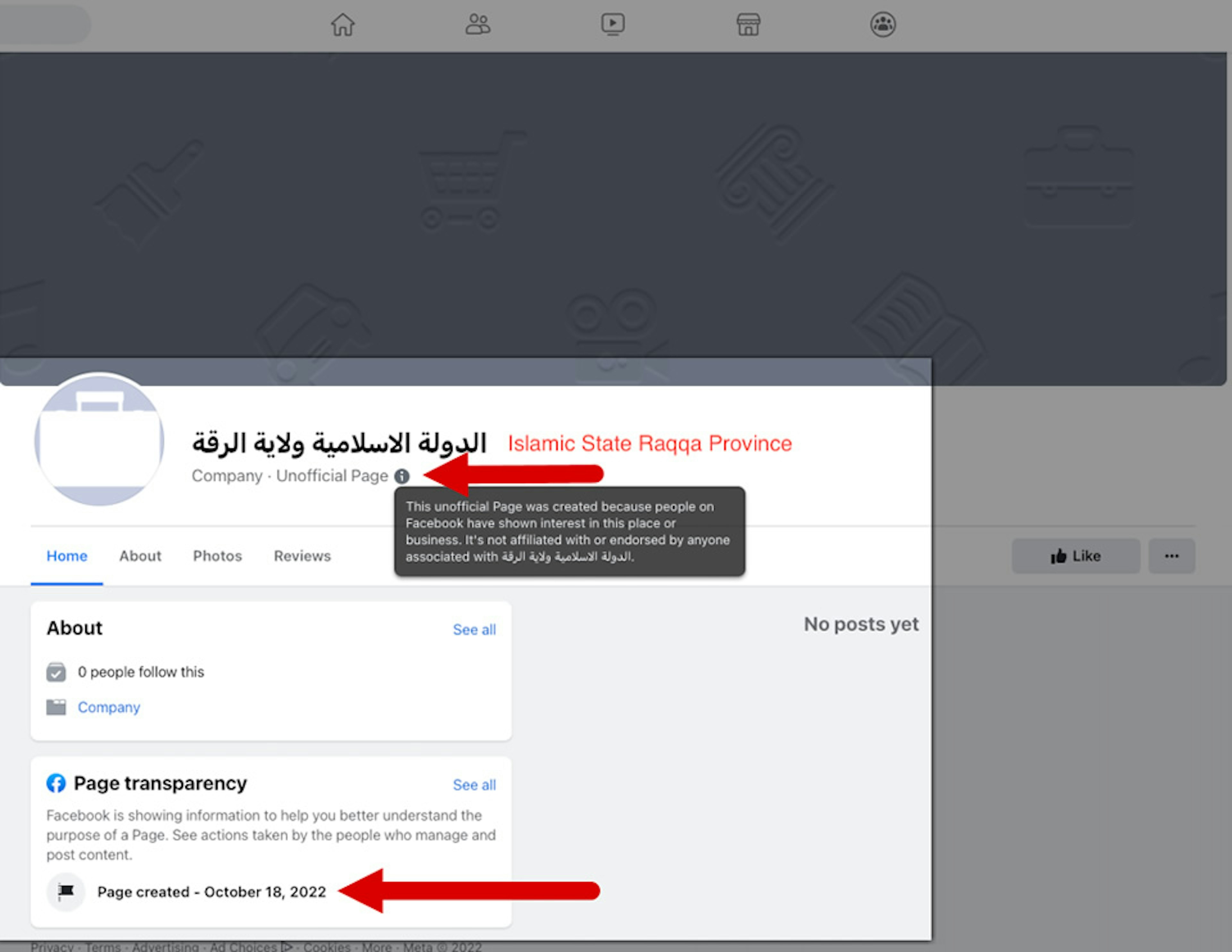

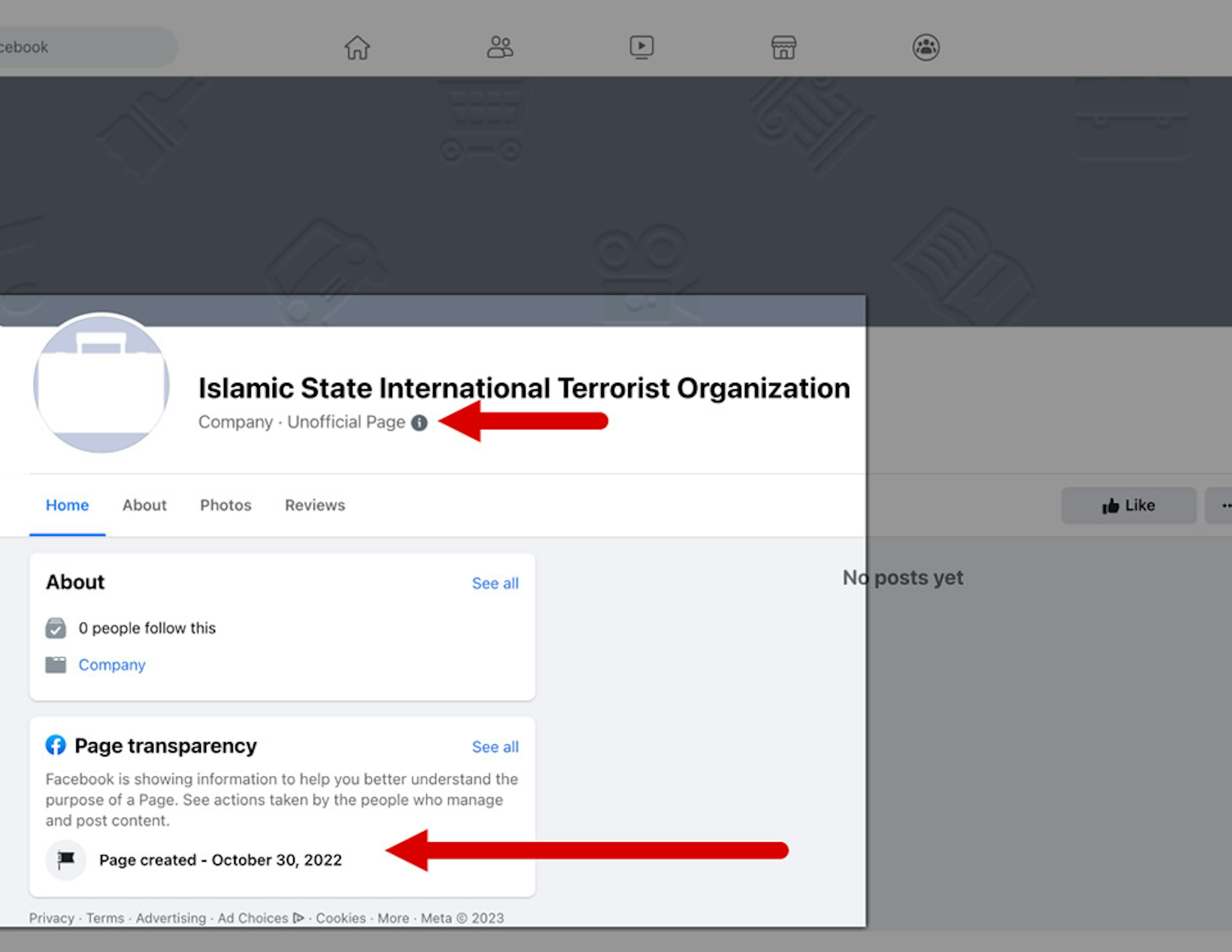

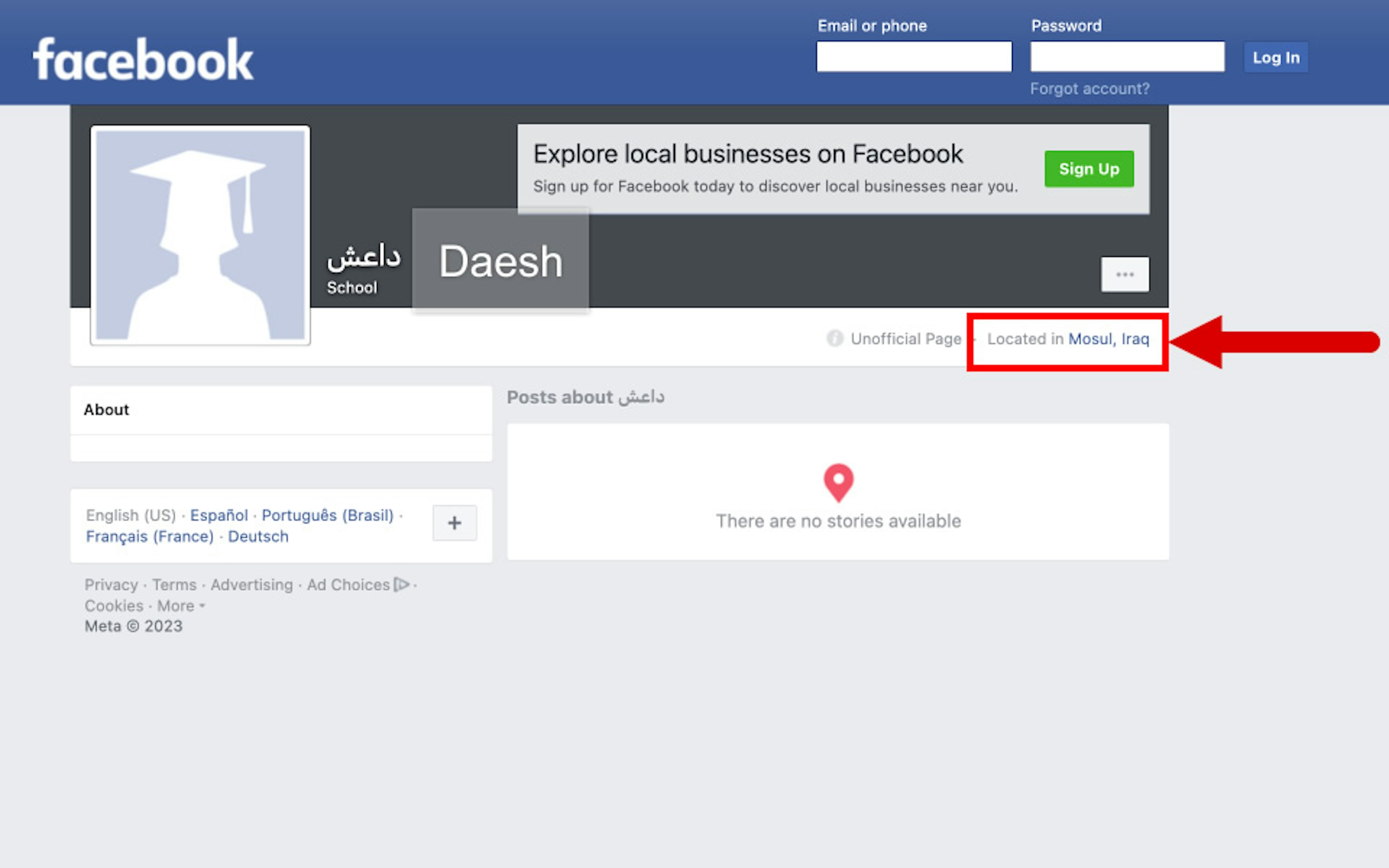

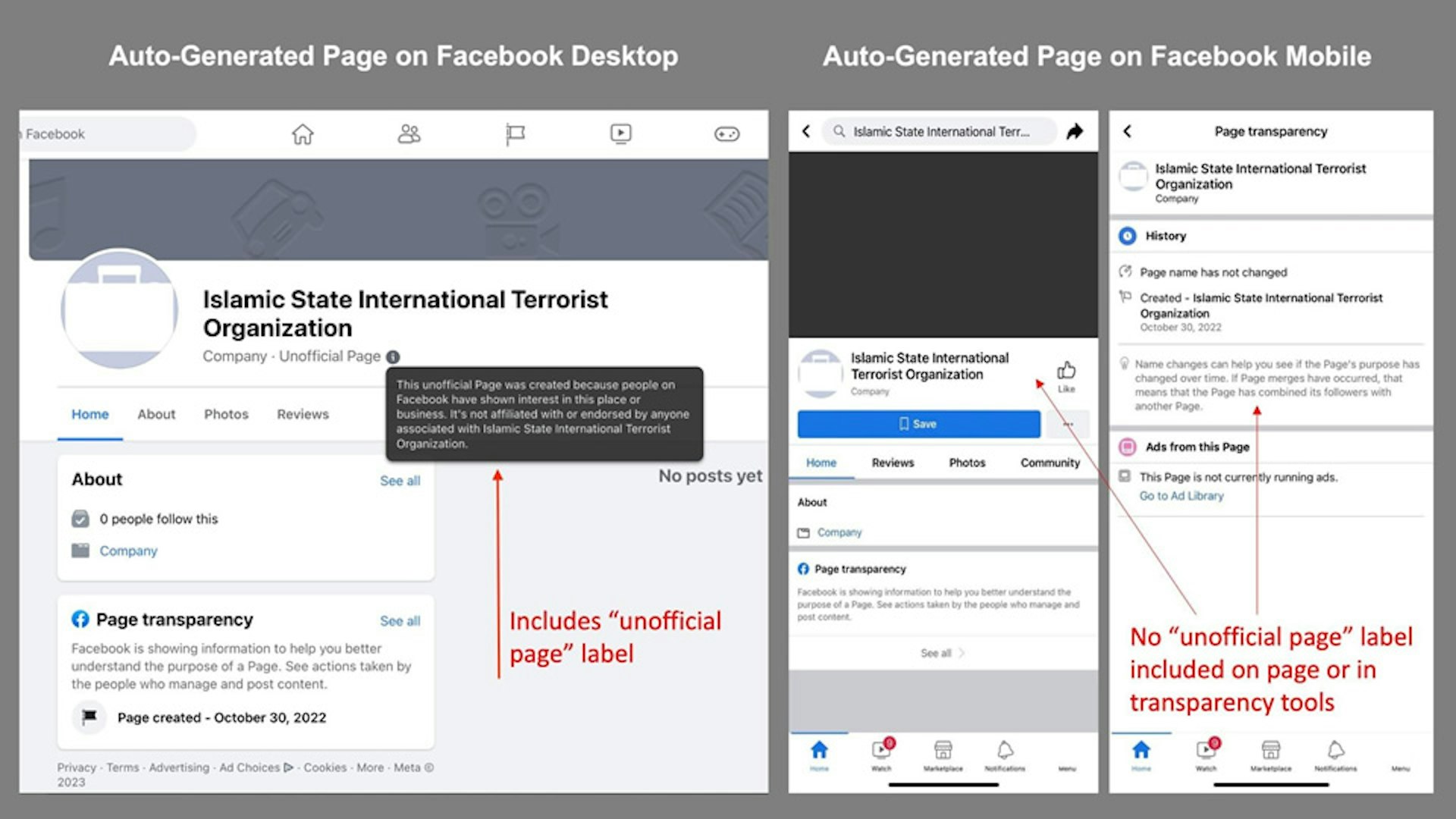

These pages are identifiable as auto-generated via a small, easy-to-miss “Unofficial Page” label, which is only visible on the desktop version of Facebook. Clicking on a tiny information button next to the label produces a pop-up box that states, in part, “This unofficial page was created because people on Facebook have shown interest in this place or business.”

These pages are all assigned a category, like “school,” “local business,” or “interest,” which presumably corresponds to information that a user entered into their profile.

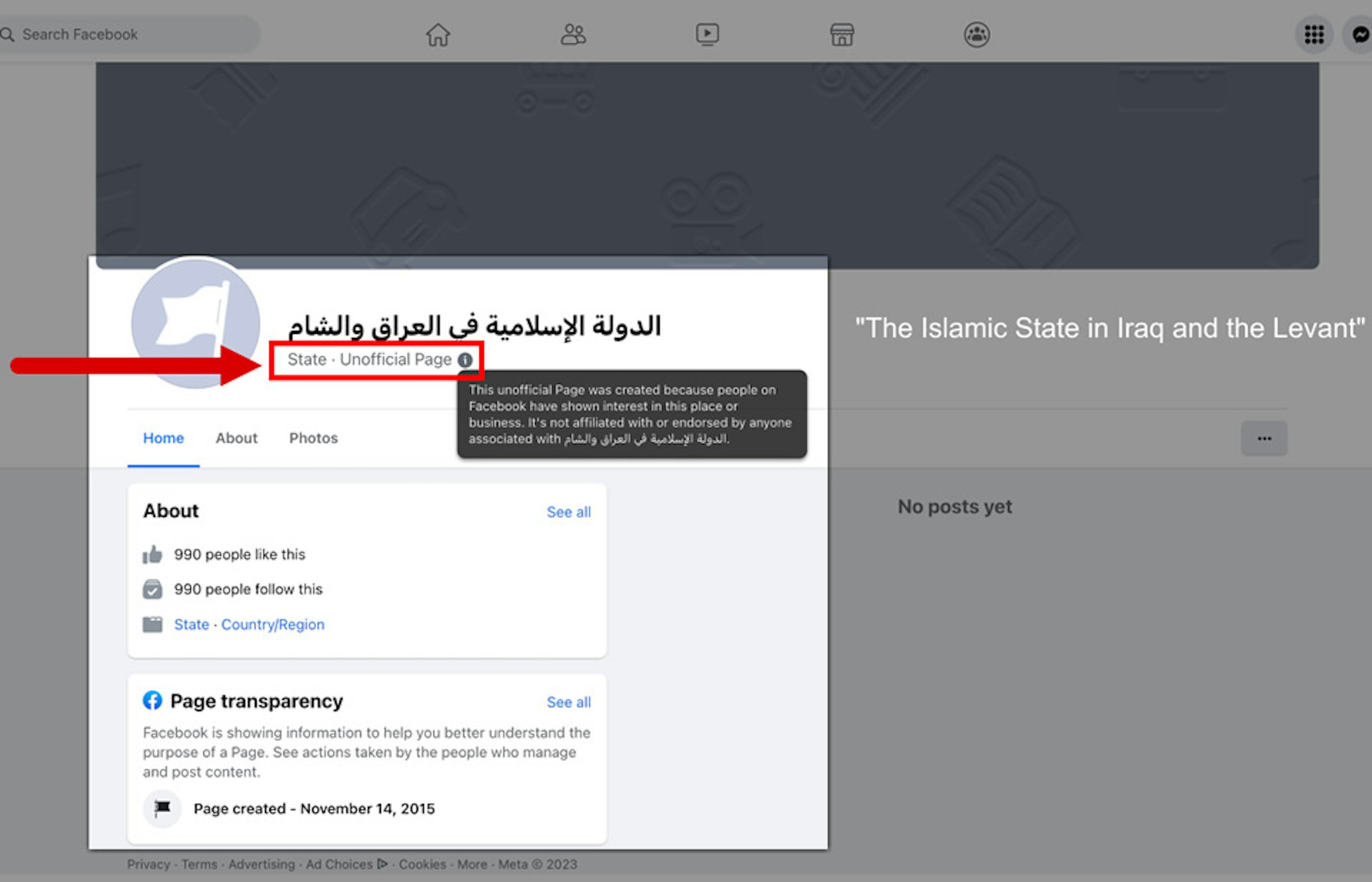

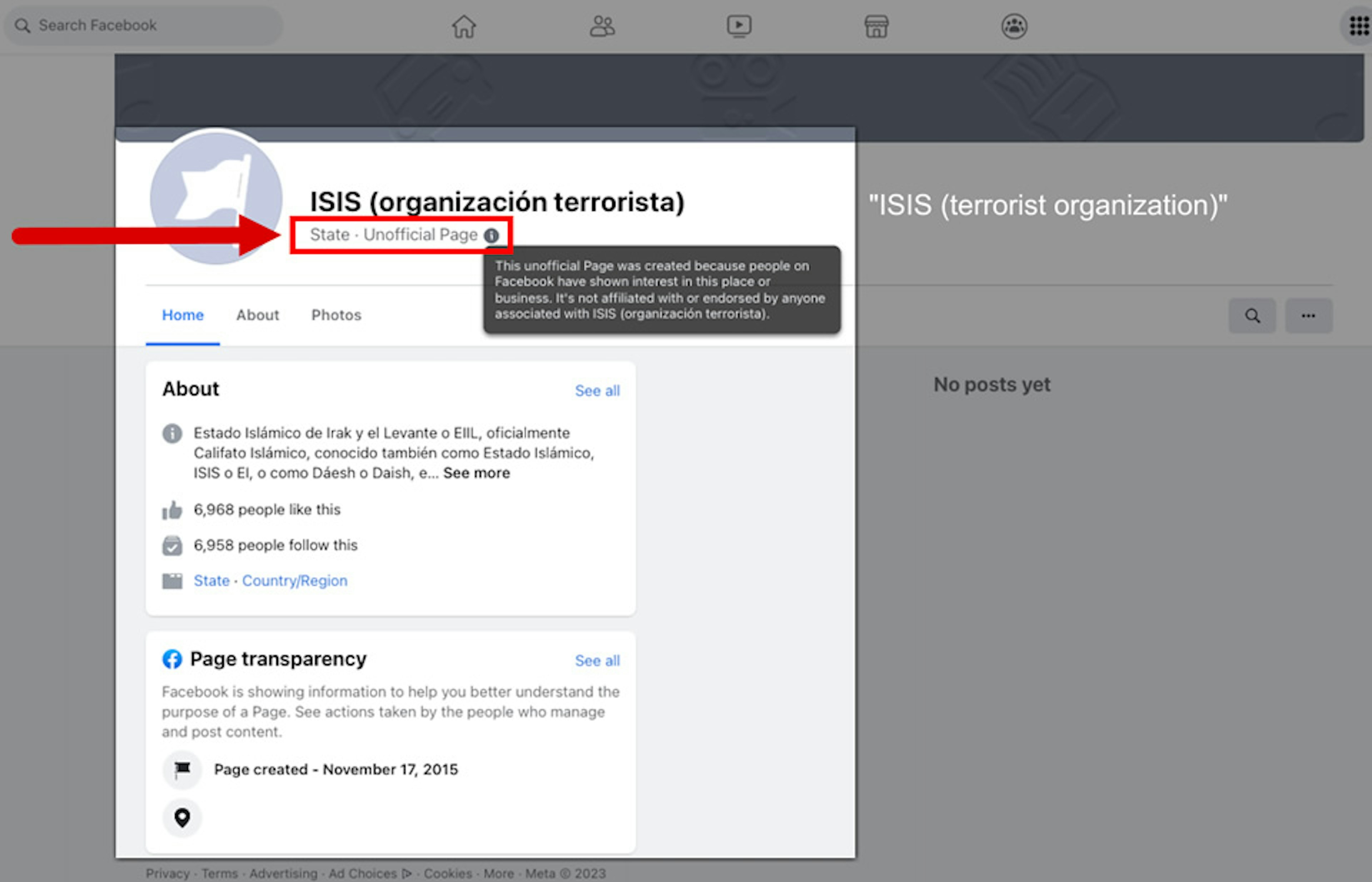

TTP found that Facebook categorized a number of auto-generated ISIS pages as a “country/region” or “state”—a designation that could serve to bolster the group’s goal of establishing an Islamic caliphate.

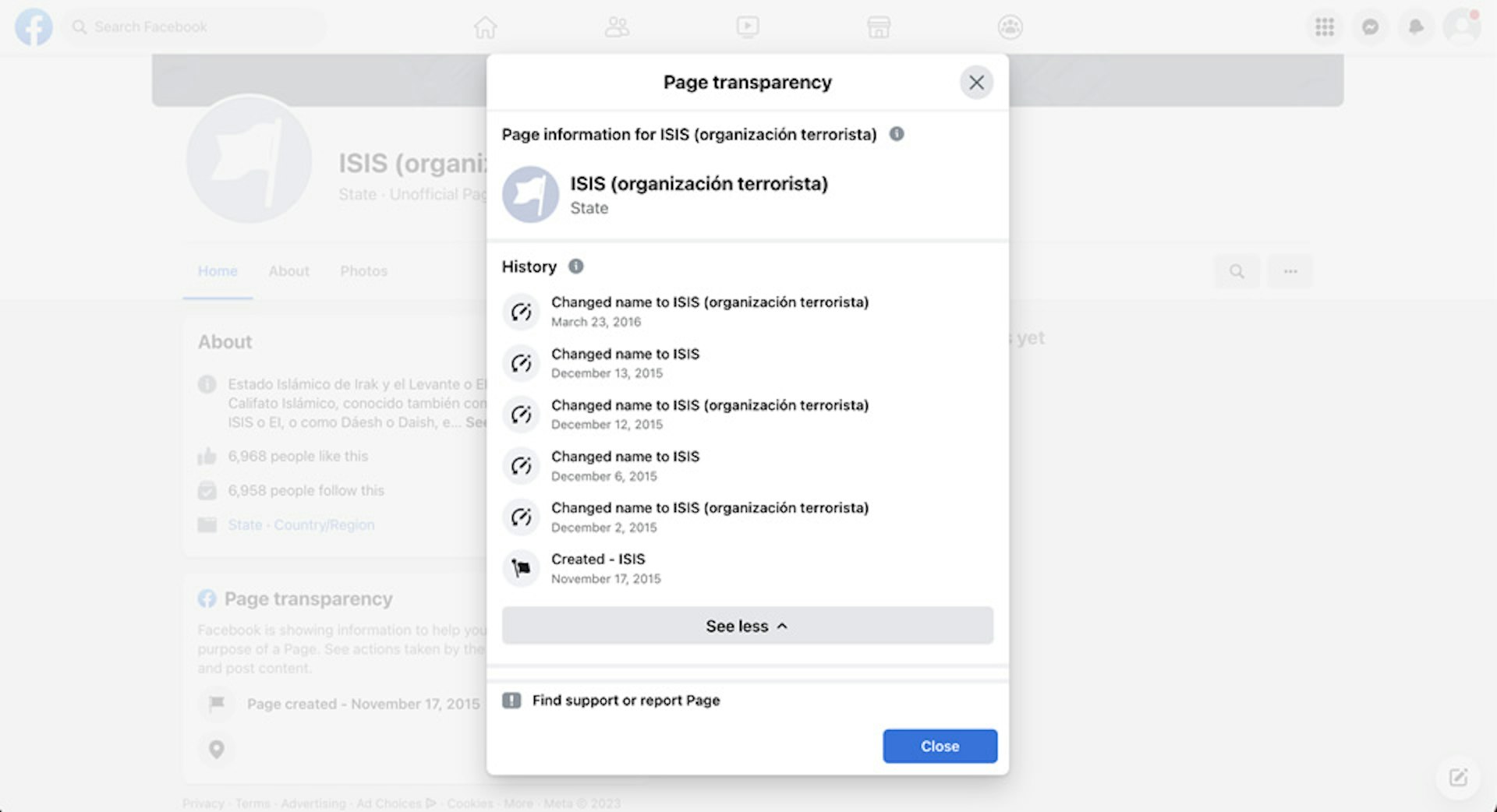

One of the auto-generated “state” pages, “ISIS (organización terrorista),” has nearly 7,000 likes, more than any other page identified by TTP.

The majority of the auto-generated ISIS pages identified by TTP (63) were created between 2014 and 2015, when the group was at the peak of its power. During that period, ISIS declared a caliphate across parts of Syria and Iraq and conducted or inspired a series of attacks around the world.

Despite suffering major territorial losses since then, ISIS still carries out opportunistic military operations, and U.S. officials warn it remains a continuing security threat regionally and globally. But Facebook still hasn’t stopped generating pages for the group. For example, the platform created four ISIS-related pages in October 2022, including “عند الدولة الأسلامية في العراق والشام” (“The Islamic State of Iraq and Al-Sham”) and “استخبارات عسكرية دولة اسلامية” (“Military Intelligence of Islamic State”).

TTP found that more than half (55) of the auto-generated ISIS pages were in Arabic—a language that Facebook has historically struggled to navigate. (Facebook’s content moderation efforts in Arabic have been described as “a disaster.”)

Many of the auto-generated pages gave their location as Iraq or Syria. A total of 14 pages listed a location in Iraq, more than for any other country in the data set.

For example, one page called “مقاتل لدى الدولة الإسلامية” (“Fighter for the Islamic State”) listed a location in Ramadi, Iraq, a city once under ISIS control. Another four pages gave their location as Mosul, Iraq’s second-largest city, which was captured by ISIS militants in June 2014. All of the Mosul pages were created during 2014-2015, when ISIS held the city.

Facebook began offering its free internet connectivity program, Internet.org, in Iraq in December 2015. Under the program, later rebranded as Free Basics, Facebook partners with telecom providers in developing countries to offer a limited set of free internet apps—including Facebook. (The effort has faced a backlash from critics who say it turns the internet into a walled garden of Facebook-approved apps for much of the world’s population.)

Despite Facebook’s efforts to gain a greater foothold in Iraq, however, the platform hasn’t fixed its problem with auto-generated ISIS pages.

Propaganda showcase

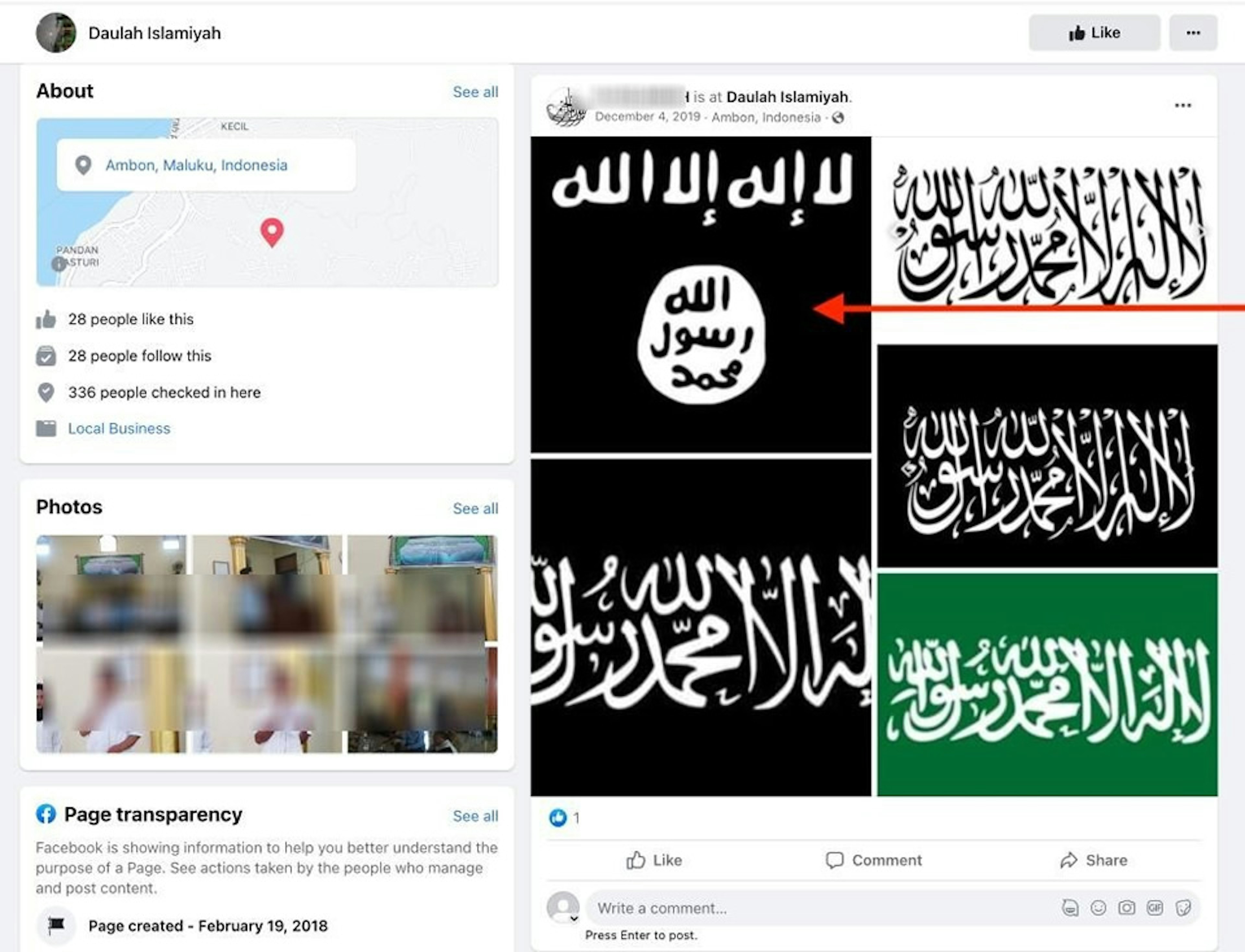

TTP found that some of the auto-generated ISIS pages allowed users to check in, tag friends, and post content—including terrorist-related content.

These were all “places” pages. Facebook created them when someone used its “places” feature to check in to a location that didn’t already have a page. (Facebook launched “places” in August 2010 in what was widely viewed as a rip-off of Foursquare, the then-buzzy check-in app.)

As TTP found, these places pages can serve as messaging vehicles and gathering points for terrorist sympathizers.

On one such page called “Daulah Islamiyah” (Indonesian for “Islamic State”), users posted the Islamic State logo as well as images of the Black Standard of Jihad, which is closely associated with Islamic State, Al Qaeda, and other terrorist groups. More than 330 people have checked in to the page. On the Daesh Syria page mentioned earlier in this report, a user posted the Black Standard of Jihad and received multiple comments from people asking them to get in touch via private message.

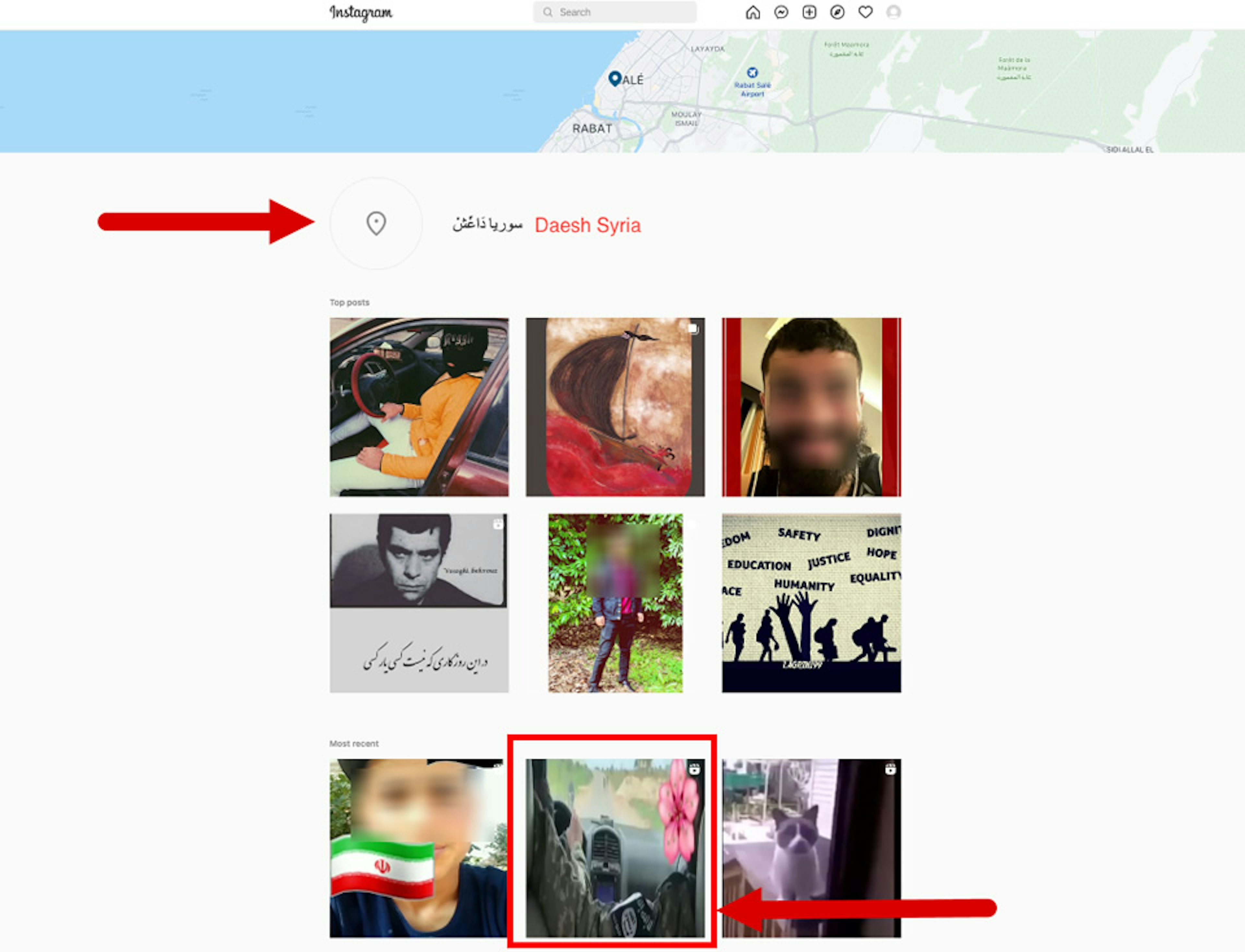

TTP found that nine of these Facebook-generated ISIS places also popped up as taggable locations on Instagram (which is also owned by Meta). These Instagram locations can become vectors for ISIS propaganda as well.

For example, an Instagram location associated with the Daulah Islamiyah Facebook page was tagged by a user in Feb. 2022 with a post calling for the return of the caliphate and warning that enemies of Islam will be destroyed by the mujahideen. Meanwhile, an Instagram location associated with the Daesh Syria page was tagged with what appears to be an ISIS promotional video in May 2022. The video shows two men in military fatigues, one wearing an armband bearing the ISIS logo, driving a vehicle through unidentified terrain, with hashtags including “جهادی” (“jihadi”) and “مجاهد” (“mujahid”).

(Instagram doesn’t allow users to create locations directly in its app, but if a user checks into a new place on Facebook, that place ports over to Instagram as well.)

TTP found that in some cases, Facebook repeatedly modified the names of auto-generated terrorist pages. For example, Facebook’s page transparency tools reveal that a page originally called “ISIS” had its name changed five times between November 2015 and March 2016, eventually becoming “ISIS (organización terrorista).”

It’s not clear why Facebook adjusted the names of these pages rather than deleting them, given their terrorist associations, but the modifications show that Facebook has some awareness that these pages exist—and is still doing nothing about them.

TTP also found that Facebook is not consistent about informing users when a page is auto-generated. Auto-generated pages viewed on desktop contain the small “Unofficial Page” label that indicates the content was generated by Facebook. But the same pages, when viewed on a mobile device, include no such labels.

After an August 2022 TTP report about white supremacist content on Facebook, which noted that the platform had removed information labels on auto-generated pages, the labels returned, but only on desktop. Given that the vast majority of Facebook users worldwide access the platform via mobile, this means that most users won’t be alerted to the fact that Facebook itself is creating these pages.

(Facebook has apparently removed several explanatory notes about its auto-generation of pages, but archived versions are still available.)

Conclusion

ISIS has long been known for its heavy reliance on social media to spread propaganda, recruit supporters, and expand its reach. As TTP’s new investigation shows, the group is getting a regular assist from Facebook, which has generated more than 100 pages for the terrorist organization and its regional offshoots.

Despite repeated warnings over the years about this problem, Facebook hasn’t taken any discernible steps to stop generating terrorist pages, which violate its own content policies and raise legal liability questions for the company.

The presence of these pages on Facebook raises new questions about the effectiveness of the platform’s detection technology, which according to Facebook is specially trained to look for ISIS and Al Qaeda content.